Announcing ReConstitution 2012

“Obama was listless up there.” “Ryan’s grasp of the facts is tenuous.” “Biden was clearly unhinged.” These are some quotes from talking-head political pundits in reference to the recent televised Presidential and Vice Presidential Debates. A lot of the ‘analysis’ coming out of the 24-hour news cycle is little more than self-reinforcing hyperbole and subjective name-calling. But what if there were a way to cut through the candidates’ and news agencies’ partisan smoke screens and see what was really going on inside the minds of our would-be leaders? Was Obama really daydreaming during the first debate? Does Romney actually believe anything that comes out of his own mouth?

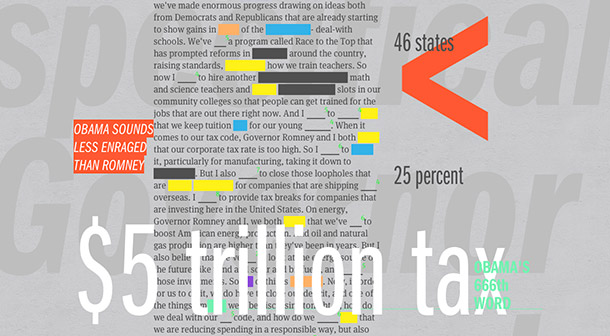

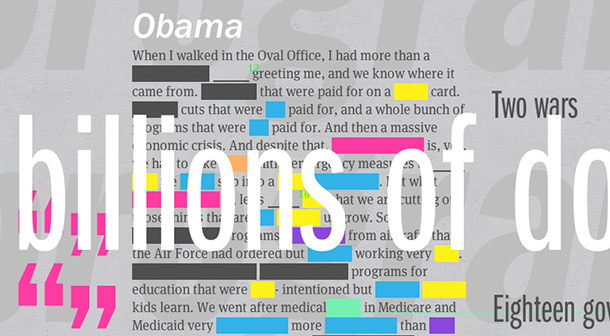

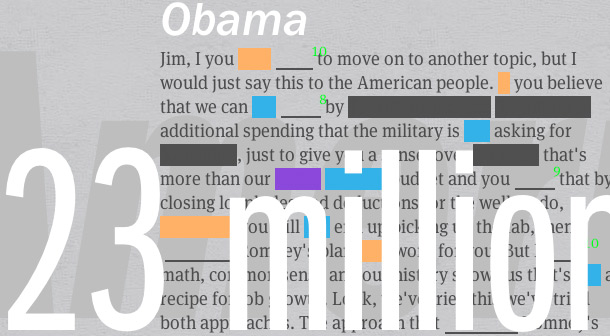

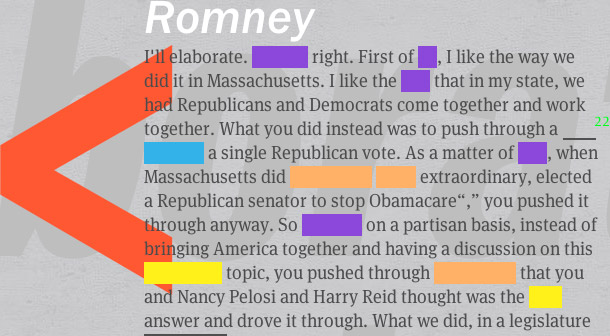

Lucky for you, ‘the People’, Sosolimited has built a better way to experience the Presidential Debates. While the Secret Service declined our request to hook Obama and Romney up to polygraph machines, we’ve done the next best thing and installed our patented Bullshit Detector™ technology onto the internet. The result is ReConstitution, your go-to resource for live Presidential Debate psychoanalysis. Using some real ‘science’, a line or two of JavaScript, and a heavy dose of C-SPAN2, we’ve built a real-time forensic app that cuts right to the chase and allows viewers to see deep into the psyches of the men competing to be Mr. USA.

This works because as much as we might try to mask our inner intentions and emotions, the language centers in our brains broadcast our inner secrets in subtle ways. Like a poker player’s ‘tell’, the character and frequency of the words we use reveal a lot about our inner psychological states. Liars tend to avoid talking about themselves in the first person. Depressed and suicidal people speak a lot more about their bodies and health than happy people.
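To make the ‘tell’ idea concrete, here is a minimal sketch of one such signal: how often a speaker uses first-person singular pronouns, per 100 words. The pronoun list and the `firstPersonRate` name are illustrative assumptions, not the models or code behind ReConstitution.

```javascript
// Tiny illustrative pronoun list (an assumption, not a LIWC category).
const FIRST_PERSON_SINGULAR = new Set([
  "i", "me", "my", "mine", "myself", "i'm", "i've", "i'll", "i'd",
]);

// Returns first-person singular pronouns per 100 words (0 for empty text).
function firstPersonRate(text) {
  const words = text
    .replace(/’/g, "'") // treat curly apostrophes like straight ones
    .toLowerCase()
    .match(/[a-z']+/g) || [];
  if (words.length === 0) return 0;
  const hits = words.filter((w) => FIRST_PERSON_SINGULAR.has(w)).length;
  return (hits * 100) / words.length;
}

console.log(firstPersonRate("I think my plan works")); // 40
console.log(firstPersonRate("The plan creates jobs")); // 0
```

A single rate like this proves nothing on its own. It only becomes interesting when compared across speakers or over the course of a debate.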

By assembling a team of the world’s fanciest psychologists, linguists, programmers, and bartenders, we have put together behavioral models that allow our system to detect lies, narcissism, depression, and senility ten times better than any jackass in a suit can on CNN. Tune in to ReConstitution2012.com during the last two debates (Tuesday October 16th 9:00 PM EST & Monday October 22nd 9:00 PM EST) to see our system in action live, or anytime afterwards for a recap.

OUR MOTIVATION:

The introduction of HTML5 and the recent explosion of open-source JavaScript development have made it possible to do some incredible things on the web, and ReConstitution 2012 represents our first foray into using this space as an artistic medium. While a lot of graphics-intensive experimenting has been done with WebGL, we wanted to explore the aesthetic possibilities of working directly with the DOM outside of the canvas. So with a backend built on Node.js using MongoDB, and a frontend built on Backbone.js using Backbone Boilerplate, we took up the challenge of translating some of our ideas from the 2004 and 2008 live debate remix performances into a form that could be experienced by a much more distributed audience. We spent a lot of time looking at typography and animation, and thinking about optimization for mobile devices.

In addition to the aesthetic and analytical goals of the project, we also saw it as a critique of traditional media coverage. Our “second screen” serves as a counterpoint to all of the Twitter feeds and ambiguous visualizations crammed into the TV frame during the debates. We figure, if there must be a spectacle, why not have fun with it and even offer some insight based on actual research? It was also important to us that this piece did not aggregate and try to leverage social media content. We wanted to offer a view of the debates that people could explore and interpret individually, rather than just repeating and reinforcing catchphrases and social media trends.

TECHNICAL DETAILS:

ReConstitution 2012 is a web platform that uploads, processes, and distributes closed captioning data in real time. The platform consists of three pieces of software: a closed caption distributor app, the backend server, and the frontend client.

The closed caption (CC) distributor is a C++ application, built on openFrameworks, that runs locally in the Sosolimited studio. This application receives CC text that has been extracted from an analog cable signal by dedicated hardware, and sends it to the backend server. It also lets us, with the press of a button, tell the system who is speaking at any given moment in the debates.

The backend server is written in JavaScript and runs on Node.js with several libraries. The server parses the incoming CC stream into words and sentences, and looks up each word in the Linguistic Inquiry and Word Count (LIWC) database. The parsed language is also run through two Java apps: the Stanford Named Entity Recognizer, to determine proper capitalization, and SentiStrength, to determine the emotional strength of each sentence. The words and sentences are tagged and stored in a MongoDB database. The server then sends each word, tagged with a collection of metadata, to all connected clients.
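The parsing and lookup steps might look roughly like the sketch below. It is not the production server: `CaptionParser`, `tagWords`, and the `CATEGORY_LOOKUP` entries are hypothetical stand-ins (the real LIWC dictionary is licensed and far larger), and the named-entity, sentiment, and MongoDB steps are left out.

```javascript
// Hypothetical stand-in for a LIWC-style dictionary (word -> category names).
const CATEGORY_LOOKUP = new Map([
  ["i", ["pronoun", "first_person"]],
  ["my", ["pronoun", "first_person"]],
  ["jobs", ["work"]],
  ["health", ["body_health"]],
]);

// Split one sentence into word records tagged with speaker and categories.
function tagWords(sentence, speaker) {
  const tokens = sentence.match(/[A-Za-z0-9']+/g) || [];
  return tokens.map((token) => ({
    word: token,
    speaker,
    // Captions often arrive in capitals, so look up the lowercase form.
    categories: CATEGORY_LOOKUP.get(token.toLowerCase()) || [],
  }));
}

// Buffers caption fragments and returns each sentence once it is complete.
class CaptionParser {
  constructor() {
    this.buffer = "";
  }

  // `speaker` is whoever is flagged as speaking when the fragment arrives.
  push(fragment, speaker) {
    this.buffer += fragment;
    const sentences = [];
    // Treat ., ! or ? as a sentence end only when followed by whitespace or
    // the end of the buffer, so "3.5" inside a fragment is not split.
    // Abbreviations such as "U.S. " still split early in this sketch.
    const terminator = /[.!?]+(?=\s|$)/g;
    let start = 0;
    let match;
    while ((match = terminator.exec(this.buffer)) !== null) {
      const end = match.index + match[0].length;
      const text = this.buffer.slice(start, end).trim();
      if (text) sentences.push({ text, speaker, words: tagWords(text, speaker) });
      start = end;
    }
    this.buffer = this.buffer.slice(start); // keep the unfinished remainder
    return sentences;
  }
}

// Fragments can break mid-word; the second sentence completes on the second push.
const parser = new CaptionParser();
const first = parser.push("I CARE ABOUT JOBS. MY PL", "obama");
const second = parser.push("AN IS HEALTH.", "obama");
console.log(first[0].words.map((w) => w.word)); // [ 'I', 'CARE', 'ABOUT', 'JOBS' ]
console.log(second[0].text); // MY PLAN IS HEALTH.
```

Buffering matters because caption text arrives in arbitrary chunks, so a sentence is only tagged once its closing punctuation has shown up.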

The frontend is written in JavaScript and is best viewed in Chrome or Safari. It is built with a Backbone.js architecture, uses several libraries including Backbone Boilerplate (BBB), Skrollr, and engine.io, and takes advantage of CSS3, HTML5, and WebSockets.
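On the client side, each incoming word can feed a running per-speaker tally. The sketch below assumes a JSON message with `word`, `speaker`, and `categories` fields. That shape and the `SpeakerTally` class are assumptions for illustration, and the Backbone views and the socket itself are omitted.

```javascript
// Keeps running per-speaker totals from a stream of word messages.
class SpeakerTally {
  constructor() {
    this.totals = new Map(); // speaker -> { words: number, categories: Map }
  }

  // `raw` is one JSON message; returns true if it was counted.
  // The message shape is assumed here, not taken from the real server.
  handleMessage(raw) {
    let message;
    try {
      message = JSON.parse(raw);
    } catch (err) {
      return false; // skip anything that is not valid JSON
    }
    if (message === null || typeof message !== "object") return false;
    const { word, speaker } = message;
    if (typeof word !== "string" || typeof speaker !== "string") return false;
    const names = Array.isArray(message.categories) ? message.categories : [];

    if (!this.totals.has(speaker)) {
      this.totals.set(speaker, { words: 0, categories: new Map() });
    }
    const entry = this.totals.get(speaker);
    entry.words += 1;
    for (const name of names) {
      entry.categories.set(name, (entry.categories.get(name) || 0) + 1);
    }
    return true;
  }

  // Share of a speaker's words that fall in `category`, from 0 to 1.
  share(speaker, category) {
    const entry = this.totals.get(speaker);
    if (!entry || entry.words === 0) return 0;
    return (entry.categories.get(category) || 0) / entry.words;
  }
}

const tally = new SpeakerTally();
tally.handleMessage(JSON.stringify({ word: "I", speaker: "romney", categories: ["first_person"] }));
tally.handleMessage(JSON.stringify({ word: "BELIEVE", speaker: "romney", categories: [] }));
console.log(tally.share("romney", "first_person")); // 0.5
```

In a browser, `handleMessage` would be wired to whatever the socket library delivers for each message. Keeping the tally separate from the transport makes it easy to replay a stored debate through the same code.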

PARTNERS:

This project was a collaboration with our longtime supporters at The Creators Project, a partnership between Vice and Intel. We also worked closely on the code with Tim Branyen and the badass team at Bocoup. The language analysis was tuned with help from Cindy Chung and James Pennebaker from the Department of Psychology at the University of Texas at Austin.